Meta Ad Account Structure: Explaining It to Your C-Suite

The three-level hierarchy

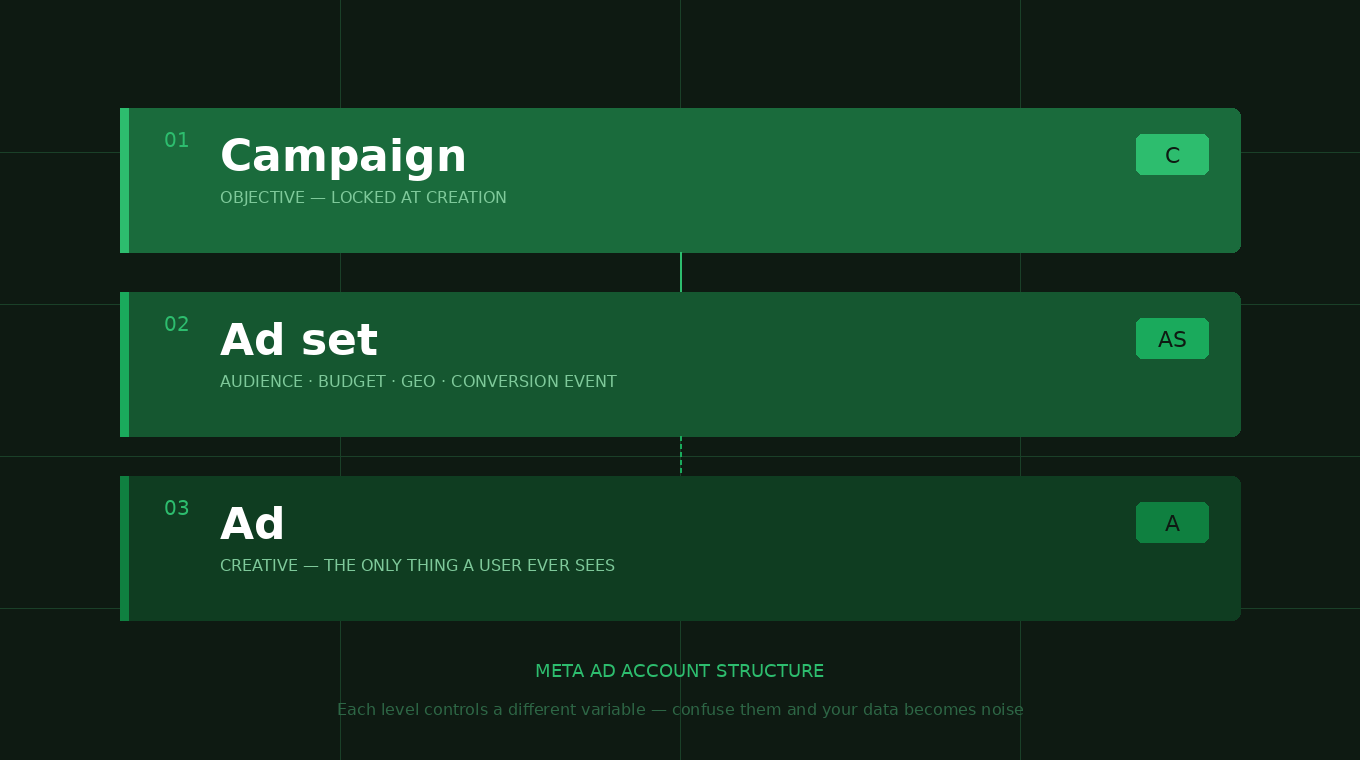

Every Meta ad account runs on a three-level structure: Campaign → Ad Set → Ad. These aren’t just organizational folders — each level controls a completely different variable. Confuse them and your data becomes noise. Most accounts I inherit have all three layers collapsed into one decision. Wrong objective, no audience separation, creative mixed in with targeting. The result is activity without insight.

“The campaign tells Meta what you want. The ad set tells Meta who to show it to. The ad is what people actually see. Confuse these layers and you’ll never know which variable is driving results — or killing them.”
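One way to picture the three levels is as nested records, each owning exactly one variable. The sketch below is only an illustration: the class names, field names, and example values are hypothetical and are not Meta's API.

```python
from dataclasses import dataclass, field


@dataclass
class Ad:
    name: str
    creative: str  # what people actually see


@dataclass
class AdSet:
    name: str
    audience: str  # who Meta shows the ads to
    ads: list[Ad] = field(default_factory=list)


@dataclass
class Campaign:
    name: str
    objective: str  # what you want Meta to optimize for
    ad_sets: list[AdSet] = field(default_factory=list)


def describe(campaign: Campaign) -> list[str]:
    """Flatten the hierarchy into one line per ad: objective > audience > creative."""
    return [
        f"{campaign.objective} > {ad_set.audience} > {ad.creative}"
        for ad_set in campaign.ad_sets
        for ad in ad_set.ads
    ]


# Hypothetical example values, for illustration only.
campaign = Campaign(
    name="example-campaign",
    objective="leads",
    ad_sets=[
        AdSet("example-ad-set", audience="lookalike", ads=[Ad("example-ad", "raw photo")]),
    ],
)
rows = describe(campaign)
```

Each row reads top-down through the hierarchy, so changing one field changes exactly one variable in the test.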

Level 1 — Campaign: locking in the objective

The campaign level does one thing: it locks in your objective. This is the most consequential decision in the entire account because Meta’s algorithm optimizes for exactly what you tell it to. Tell it leads — it finds form-fillers. Tell it purchases — it finds buyers. Wrong objective means wrong traffic, regardless of how strong everything else is.

This is also the level I see burned most often by previous vendors. Running branded campaigns disguised as lead generation. Optimizing toward link clicks when the goal is form submissions. The platform follows your instructions precisely — so you need to give it the right ones.

Sales: Optimizes toward purchase events. Every dollar directed at people most likely to transact. Requires pixel purchase data; without it, the algorithm is guessing.

Catalog Sales: Pulls live product data from your feed and dynamically assembles ads. Meta matches products to people based on browse history and intent signals.

Leads (website): Drives to a landing page and optimizes for form completions. Requires a configured pixel and conversion event. Higher intent, more friction.

Leads (instant form): Form lives inside Meta. Lower friction for the user, higher volume, typically lower intent. Strong for service businesses at top of funnel.

Level 2 — Ad sets: the separation layer

This is where most ad accounts fall apart. The instinct is to throw everything into one ad set and let Meta sort it out. We don’t do that. Each ad set is a contained unit — one audience, one geography, one budget.

When audiences compete inside the same ad set, Meta biases toward the easier-to-reach pool. You end up with a “winner” that isn’t better; it just had more available reach. Separate ad sets mean separate signals and decisions you can actually act on.

Two markets — even in the same metro — have different competition levels, CPLs, and customer profiles. Lumping them together blurs every metric. You can never answer “which market is actually working?” when they share a budget.

Lookalike audiences, Advantage+ broad, interest segments, and retargeting pools all convert at different rates and costs. One ad set means you’ll never isolate which bucket is driving results — or quietly draining budget.

The optimization event trips people up constantly. The ad set is where you tell Meta which specific action to optimize toward: a form submission, a purchase, a custom qualified lead event. This directly controls the bidding mechanism.
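The "one audience, one geography" rule from this section can be checked mechanically before launch. The sketch below assumes you keep a simple planning list of ad sets. The dictionary keys and example names are hypothetical and do not come from Ads Manager.

```python
def mixed_ad_sets(ad_sets: list[dict]) -> list[str]:
    """Return names of ad sets that combine more than one audience or geography."""
    return [
        s["name"]
        for s in ad_sets
        if len(s["audiences"]) != 1 or len(s["geos"]) != 1
    ]


# Hypothetical planning data, for illustration only.
planned = [
    {"name": "market-a-lookalike", "audiences": ["lookalike"], "geos": ["Market A"]},
    {"name": "everything", "audiences": ["lookalike", "interest"], "geos": ["Market A", "Market B"]},
]
flagged = mixed_ad_sets(planned)
print(flagged)
```

Anything the check flags is an ad set whose results could not be attributed to a single audience or market.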

Level 3 — Ads: dynamic vs. non-dynamic

The ad level is the creative testing layer. How you structure your ads determines how you test — and whether your results are usable. The dynamic vs. non-dynamic decision is a strategic one that determines what you learn.

The constraint to plan around: Dynamic ad sets hold one ad. That one ad carries up to 10 creatives, 5 headlines, 5 primary texts, 5 descriptions — Meta tests combinations automatically. What you lose is cross-service-line comparison. Interior painting vs. exterior painting need separate dynamic ad sets so you’re comparing apples to apples. And yes — every edit to that single ad risks resetting learning. Have a plan before you touch it.
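To get a feel for how much testing one dynamic ad can absorb, here is the arithmetic on the asset limits quoted above. It assumes every asset can pair with every other, which is an upper bound. Meta may not serve every combination.

```python
from math import prod

# Per-ad asset limits as stated in the text above.
ASSET_LIMITS = {"creatives": 10, "headlines": 5, "primary_texts": 5, "descriptions": 5}


def max_combinations(limits: dict[str, int]) -> int:
    """Upper bound on distinct asset combinations in a single dynamic ad."""
    return prod(limits.values())


total = max_combinations(ASSET_LIMITS)
print(total)  # 1250
```

That ceiling is why mixing service lines in one dynamic ad muddies the comparison: the combinations multiply faster than any budget can evaluate them.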

Real accounts — how this plays out in practice

Here’s a live look at three accounts. Every campaign, ad set name, and creative decision maps back to a deliberate testing hypothesis. Client names are omitted. Data is from April 2026.

The naming convention — your account’s data taxonomy

The naming convention maps directly to the three-level hierarchy. Campaign names encode the objective and service line. Ad set names encode the geo, audience type, and format. Ad names encode the creative variant. Done right, you can read a full campaign/ad set/ad name and immediately know: what are we optimizing for, who are we targeting, how are we targeting them, and what creative are they seeing.

This is diagnostic infrastructure. When CPL spikes at 7am and you’re filtering 40+ ad sets in Ads Manager, the name is your first triage tool. One glance should tell you whether the issue is at the audience layer, geo layer, or creative layer.
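A naming convention like this can be parsed by a script as well as read at a glance. The sketch below assumes a hypothetical underscore-separated format (campaign = objective_serviceline, ad set = geo_audience_format, ad = creative variant). The separator, field order, and example names are assumptions for illustration, not the convention used in the accounts above.

```python
from typing import NamedTuple


class ParsedName(NamedTuple):
    objective: str
    service_line: str
    geo: str
    audience: str
    ad_format: str
    creative: str


def parse_full_name(campaign: str, ad_set: str, ad: str, sep: str = "_") -> ParsedName:
    """Split campaign and ad set names into fields; raises ValueError on a malformed name."""
    objective, service_line = campaign.split(sep)
    geo, audience, ad_format = ad_set.split(sep)
    return ParsedName(objective, service_line, geo, audience, ad_format, ad)


# Hypothetical names, for illustration only.
parsed = parse_full_name("Leads_ExteriorPainting", "MarketB_LAL_Dynamic", "BrollVideo")
print(parsed.geo, parsed.audience, parsed.creative)
```

Because a malformed name raises an error instead of parsing silently, the same function doubles as a check that new ad sets follow the convention.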

Why we test everything — and what we’re actually looking for

Here’s the thing about Meta advertising that most people get wrong: assumptions don’t scale, data does. Every client we onboard has a hypothesis about who their best customer is, what message resonates, and which creative stops the scroll. Sometimes they’re right. More often, the data surprises everyone — including me.

The structure we’ve built exists to surface those surprises cleanly, quickly, and without burning through budget. Testing isn’t a phase at launch. It’s the operating model.

Different objectives unlock different bidding algorithms. Running Leads and Catalog Sales simultaneously — with separate budgets — tells you whether your product is better served by intent-driven or volume-driven traffic. You don’t know until you run both. In the ecomm account, Catalog Sales at $0.25 CPC completely changed the budget allocation away from leads campaigns at $0.70+.

Meta gives you multiple audience methods: broad Advantage+, lookalikes from different seed lists, interest targeting, demographic overlays. Each captures a different type of person. The prospect who responds because they’re in a home improvement interest group is a completely different lead than someone who looks like your last 100 booked clients. Expect different CPLs, close rates, and job values downstream.

Dynamic lets Meta test copy and creative combinations automatically — and you CAN surface winners within that ad (10 creatives, 5 headlines, 5 primary texts, 5 descriptions). Non-dynamic gives you exact isolation. The key: if you have multiple service lines, those need separate dynamic ad sets — otherwise you’re not comparing apples to apples.

Audience performance data — last 30 days

Here’s what structured audience testing actually produces. Real CTR, CPC, and CPM numbers from three live accounts. The differences between audience buckets are not small. They’re the difference between a $0.82 CPC and a $1.33 CPC, between a 3.8% CTR and a 1.3% CTR. At scale, that delta is your entire margin.

A note on these metrics: CTR, CPC, and CPM are platform-level distribution signals — not revenue. They tell you how efficiently Meta is delivering your ads to your target audience. Lead quality, close rate, and downstream revenue live in your CRM. Use these numbers to compare audience buckets against each other within an account, not as absolute benchmarks across industries.
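For readers who want the definitions behind those three metrics, the standard formulas are sketched below. The input numbers are made up for illustration and are not from the accounts above. Note also that Ads Manager reports more than one CTR variant, so check which click count you are using.

```python
def ctr_pct(clicks: int, impressions: int) -> float:
    """Click-through rate as a percentage of impressions."""
    return 100 * clicks / impressions


def cpc(spend: float, clicks: int) -> float:
    """Cost per click."""
    return spend / clicks


def cpm(spend: float, impressions: int) -> float:
    """Cost per 1,000 impressions."""
    return 1000 * spend / impressions


# Hypothetical inputs: $100 spend, 10,000 impressions, 125 clicks.
spend, impressions, clicks = 100.0, 10_000, 125
print(ctr_pct(clicks, impressions), cpc(spend, clicks), cpm(spend, impressions))
```

All three are delivery ratios built from spend, impressions, and clicks, which is why none of them can tell you anything about lead quality on their own.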

What this tells us — Affiliate Client

Advantage+ broad is outperforming every manually constructed lookalike on CTR — Meta’s algorithm finds better matches than any seed list I can build. The HHI income overlay at $0.82 CPC is the most cost-efficient audience in the account. The Qualified Leads LAL at $0.96 CPC is the strongest lookalike — built from the best seed. The education filter ran at $1.08–$1.33 CPC across two campaigns. Both rows underperformed Adv+ and HHI. Audience hypothesis ruled out, budget redirected.

What this tells us — Ecomm Client

Catalog Sales operates at a completely different cost structure — $0.25 CPC vs $0.70+ on leads campaigns. Catalog lets Meta match inventory to people with active purchase intent signals — the algorithm starts with a warmer pool. The HHI 10% income overlay is the worst performer at $2.34 CPC. Narrowing by income in a consumer product category tightened the audience without improving quality. Classic test that saved significant future budget by failing fast.

What this tells us — Service Client

The most expensive creative format, a produced selfie-style video, is the worst performer at $4.35 CPC. The unedited raw photo has the highest CTR in the account at 3.8% and a $1.06 CPC. Authentic, zero-production content is outperforming produced content by roughly 4x on cost efficiency ($4.35 ÷ $1.06 ≈ 4.1). You only know that because they ran as separate non-dynamic ads with isolated spend.

30-day spend distribution

Each bar is a distinct campaign with a distinct role. The largest concentrations track directly to the strongest-performing audience/creative combinations — not to the most recent launch.

The same creative hits differently across audiences

This is the insight most advertisers miss. A piece of creative doesn’t have a universal performance number; it has a performance number relative to the audience seeing it. The B-roll video in the Service Client’s Market B LAL ad set delivers 2.6% CTR at $1.02 CPC. That same video against a broad Advantage+ audience in Market A delivers 1.4% CTR at $1.67 CPC. Same creative. Different audiences. Completely different economics.
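To put a size on that gap, here is the comparison worked through using the four figures quoted above. The variable names are illustrative.

```python
# Figures quoted in the text for the same B-roll video in two ad sets.
market_b_lookalike = {"ctr_pct": 2.6, "cpc": 1.02}
market_a_broad = {"ctr_pct": 1.4, "cpc": 1.67}

# How many times higher the CTR is in Market B, and how many times
# more each click costs in Market A.
ctr_ratio = round(market_b_lookalike["ctr_pct"] / market_a_broad["ctr_pct"], 2)
cpc_ratio = round(market_a_broad["cpc"] / market_b_lookalike["cpc"], 2)

print(ctr_ratio, cpc_ratio)  # 1.86 1.64
```

So the same video earns about 1.9x the click-through rate in one pairing and costs about 1.6x as much per click in the other.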

This is why we don’t “find the best ad” and scale it everywhere. We find the best ad/audience combination — and that combination is different for every market, every offer, and often every month.

The testing matrix — audience type × ad format

Before building any new campaign, this is the decision framework I apply. Every cell represents a hypothesis. The account structure’s job is to test those hypotheses without contaminating each other’s data.

Meta finds audience AND optimizes creative. Fastest learning. Use when you don’t yet know who your customer is.

Meta finds audience, you control creative. Tests whether specific offer language drives action at scale.

Known audience profile, Meta-optimized creative. Tests whether your best-fit audience responds to a variety of messages.

Known audience + known creative = cleanest test. Highest signal quality, highest overhead. Worth it at scale.

Does narrowing by income/age/education improve lead quality? Dynamic keeps volume high enough to reach significance faster.

Tests whether a specific demographic responds to a specific message. Validates or kills a persona hypothesis with real spend.
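The six cells above can be enumerated as a cross product of audience type and ad format. The labels below are my reading of the cells (broad Advantage+, lookalike, and demographic overlay, each crossed with dynamic and non-dynamic). Treat them as assumptions, not as official Meta terminology.

```python
from itertools import product

# Assumed axis labels, inferred from the cell descriptions above.
AUDIENCE_TYPES = ["Advantage+ broad", "lookalike", "demographic overlay"]
AD_FORMATS = ["dynamic", "non-dynamic"]


def build_matrix(audiences: list[str], formats: list[str]) -> list[dict]:
    """One cell per audience/format pair; each cell is a separate hypothesis to test."""
    return [
        {"audience": a, "format": f, "hypothesis": f"{a} x {f}"}
        for a, f in product(audiences, formats)
    ]


cells = build_matrix(AUDIENCE_TYPES, AD_FORMATS)
for cell in cells:
    print(cell["hypothesis"])
```

Adding a fourth audience type adds two more cells, which is a quick way to see how test overhead grows with each new hypothesis.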

Dynamic vs. non-dynamic — when to use which

This isn’t a permanent decision — it’s a stage-of-testing decision.

New account, new offer, new market. You don’t have enough creative data yet. Dynamic lets Meta run the experiments while your budget goes toward finding winners — not funding a structured test you can’t yet design.

Dynamic has surfaced winning combinations. Now: what specifically is winning? Move top-performing copy + creative combos into separate non-dynamic ads. Now you know what to scale — and you know why. That “why” is what drives confident budget decisions.

Most established accounts. Dynamic ad sets continue finding new combinations and testing new audiences. Non-dynamic ad sets run proven winners at scale. The dynamic layer feeds the non-dynamic layer. One without the other leaves either performance or learnings on the table.

What happens when you don’t test

The alternative to structured testing isn’t “not testing” — it’s unstructured testing. Every dollar you spend is a test whether you design it that way or not. The question is whether you learn anything from it.

Without separate ad sets per audience, without creative isolation, without campaign type separation — you’re running an experiment with no control group. You’re getting results. You’re just not learning why. And without the why, you can’t replicate the wins or fix the losses.

I’ve worked with accounts spending $30K/month that couldn’t answer “which audience is driving qualified leads?” because everything was collapsed into one campaign. Restructuring those accounts — applying exactly the hierarchy in this article — consistently surfaces the same finding: 20–30% of budget is funding audiences or creative types that generate activity but not revenue. Testing isn’t overhead. It’s the most efficient use of ad spend you can make.
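In dollar terms, the 20–30% figure on a $30K/month account works out as follows. This is simple arithmetic on the numbers quoted above, and the function name is illustrative.

```python
def misallocated_range(monthly_spend: int, low_pct: int = 20, high_pct: int = 30) -> tuple[float, float]:
    """Dollar range of monthly spend falling inside the given percentage band."""
    return monthly_spend * low_pct / 100, monthly_spend * high_pct / 100


low, high = misallocated_range(30_000)
print(low, high)  # 6000.0 9000.0
```

That is $6,000 to $9,000 a month, or $72,000 to $108,000 a year if the pattern holds.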

“We’ve seen accounts where the most expensive creative format — produced, polished, everything you’d expect to perform — is the worst performer by 4x. You only know that if you test them separately. That’s six figures in future budget decisions sitting inside a structure question.”

The bottom line: A well-structured Meta account isn’t about running more campaigns — it’s about generating cleaner data. Every structural decision exists to answer one question clearly: what is actually working, and why. That’s what drives scale — and that’s the standard we hold every account to.

If you have questions on any of the above, don’t hesitate to reach out. And keep in mind, these are just the structure pieces. There are still another 100 levers and buttons we can push and pull from here. Structure is the foundation. Everything else is where the real fun starts. 😄

— Drew
